2,657+ studies screened in the latest systematic review

Research Report

The Case for Oral Assessment in Higher Education

Evidence, innovation and institutional imperatives for university decision-makers.

AI-generated exams that went undetected: 32 of 33

Students self-reporting contract cheating

Years of oral assessment tradition

Three questions define every assessment.

AI has changed which ones still matter.

AI-generated work passes detection in most cases. Authorship is no longer a reliable signal.

AI produces structured, plausible answers by default. Correctness no longer proves understanding.

Understanding is revealed through explanation. It can only be tested in real time.

AI has commoditised the first two. Only the third still reveals understanding. Oral assessment is how you access it.

Academic Integrity

AI-generated exam answers went undetected in 32 of 33 cases.

A 2024 University of Reading study submitted AI-generated answers alongside genuine student work. Markers identified just one.

Detection tools measure what has already been produced. They cannot verify how it was created.

Oral assessment changes the question entirely. Instead of asking “Who wrote this?”, it asks “Do you understand it?”

Students self-report contract cheating

Estimated impact on UK degrees: 8–9% may be compromised by contract cheating

Deeper Learning

Performance improves when assessment requires explanation, not just production.

A 2024 systematic review found that students perform better in oral assessments than written ones, with outcomes influenced by the quality of design and implementation.

Oral formats require real-time retrieval, explanation and defence — rather than the rehearsed, polished written responses that can mask shallow understanding.

Explaining ideas under questioning strengthens retention and supports the kind of flexible, transferable understanding that higher education aims to develop.

Oral assessment range: higher-order thinking

Written assessment range: lower-order recall

Graduate Readiness

Oral assessment develops the competencies employers actually hire for.

Employer surveys consistently identify oral communication, critical reasoning and the capacity to explain complex ideas under pressure as highly valued graduate competencies.

Assessment regimes dominated by coursework and written examination provide limited opportunity for students to develop and demonstrate those capacities in forms that resemble professional practice.

Oral assessment addresses that misalignment by requiring students to articulate reasoning, respond to challenge and communicate understanding in real time.

The competencies evaluated in graduate employment are precisely those oral assessment is well placed to cultivate.

Rigour & Reliability

Reliability comes from assessment design, not the format alone.

This report snapshot isolates the central finding from the literature: oral assessment becomes reliable when the surrounding conditions are structured.

Across the evidence base, validity and acceptability rise when sessions are guided by defined questions, shared rubrics and calibrated examiners.

The implication is narrow but important. Poorly designed oral assessment produces variable outcomes. Well-designed oral assessment produces defensible ones.

Snapshot: consistency is created by structure, not by format alone.

Poorly designed oral assessment:

- Inconsistent outcomes

- Harder to defend

Well-designed oral assessment:

- Stable judgement

- Defensible grading

The format is not the control. The design is.

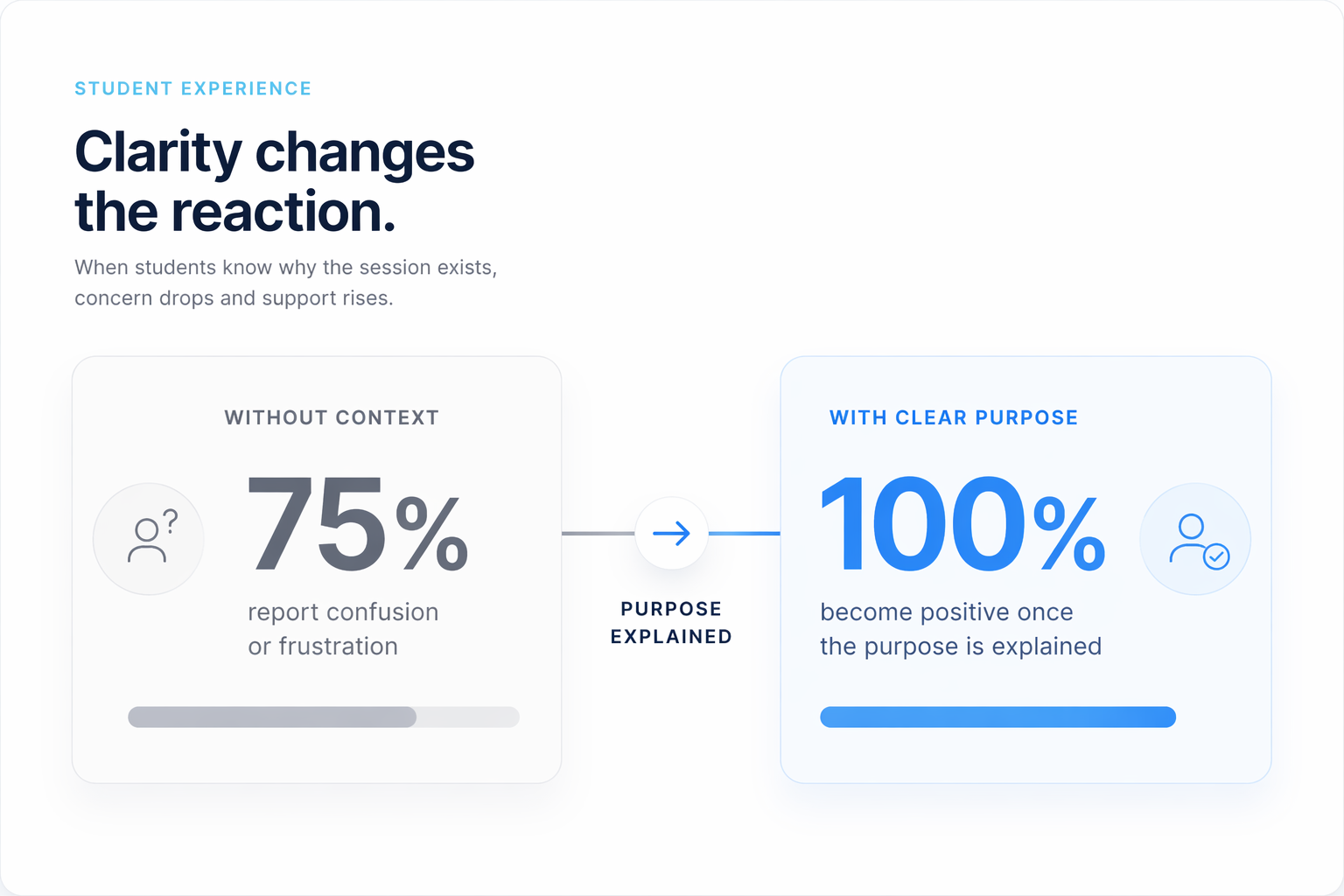

Student Experience

Frame it clearly, and scepticism becomes buy-in.

The reaction changes fastest when the format is explained upfront. Students who arrive without context often read the viva as a trap or a hidden grading mechanism.

Once the purpose is made explicit (checking understanding rather than catching them out), students describe the same process as fair, useful and relevant to their own work.

The critical variable is communication, not the format.

Students also point to a second gain: defending their thinking out loud feels closer to the professional situations they expect beyond university.

Academic integrity is shifting from detection to design.

Detection-based integrity

- Reactive — identifies issues after the fact

- Dependent on text-matching and detection tools

- Locked in an arms race with generative AI

- Creates an adversarial relationship with students

- Structurally disadvantaged as AI improves

Integrity by design

- Proactive — reduces the opportunity for dishonesty

- Requires live demonstration of understanding

- Resistant to AI-generated responses

- Collaborative — students demonstrate understanding

- Sustainable as assessment scales

Detection-based approaches degrade as AI improves. Design-based approaches do not.

This is what integrity by design looks like.

Addressing the concerns

The concerns are legitimate. The evidence addresses each one.

What about student anxiety?

What about examiner bias?

What about equity and accessibility?

Can it scale?

What this means for your institution

The evidence points to clear changes in how assessment should be designed, supported and communicated.

Assessment Strategy

Embed oral assessment where verification matters most.

- Prioritise high-stakes and high-risk modules

- Extend beyond doctoral and clinical contexts

- Align use with outcomes that require explanation

Integrity Frameworks

Shift from detection to verification.

- Use oral assessment as a complementary integrity layer

- Target modules vulnerable to outsourcing

- Reduce reliance on text-matching as the primary response

Staff Development

Design for reliability, not improvisation.

- Train staff in structured questioning

- Standardise rubric use and marking criteria

- Support bias-aware assessment practice

Student Communication

Clarity determines acceptance.

- Explain purpose before deployment

- Frame it as learning, not surveillance

- Use consistent messaging across modules

Inclusive Design

Build accessibility into the model from the outset.

- Offer alternative response formats where needed

- Provide clear expectations and preparation time

- Train examiners for adaptive delivery

Access the full research report.

Includes full methodology, cited evidence and detailed analysis for institutional review.

- Systematic review of 2,657+ studies on oral assessment outcomes

- Validity, reliability and academic integrity analysis

- Practical implementation guidance for faculty and registry teams

From research to practice.

How institutions are introducing oral assessment and what early outcomes are emerging.

Cardiff University, UK

Research-intensive university piloting structured oral assessment within postgraduate modules, alongside existing coursework, to strengthen verification of student understanding in an AI-influenced environment.

- Early pilots indicate improved clarity in student reasoning during assessment

- Academics report increased confidence in distinguishing genuine understanding from AI-assisted output

- Students highlight greater transparency in how their work is evaluated

Expanded case study currently in development.

Lindenwood University, USA

Private university integrating structured oral assessment into undergraduate coursework to support student articulation and strengthen assessment integrity alongside written submissions.

- Increased student engagement during assessment processes

- Improved visibility into individual student understanding

- Faculty exploring integration across additional modules

London South Bank University, UK

Public university exploring oral assessment within applied coursework modules, focusing on real-time explanation and alignment with professional skill development.

- Strong alignment with employability-focused assessment models

- Students report increased confidence in explaining their work

- Ongoing evaluation of impact across larger cohorts

Cross-module rollout, UK

Early institutional exploration of structured oral assessment across multiple module types, focusing on scalable deployment, staff confidence and student communication.

- Assessment teams testing rollout beyond isolated pilots

- Staff evaluating consistency and delivery at scale

- Student-facing communication being refined alongside implementation

Further reading and evidence base

The primary sources underpinning this report, curated for university decision-makers.

Systematic Reviews

The validity, reliability, academic integrity and integration of oral assessments in higher education

Nallaya et al. · Issues in Educational Research · 2024

Systematic review of 2,657 studies showing oral assessment outcomes depend on design, but offer strong gains in validity, integrity and deeper learning.

Structured viva validity, reliability, and acceptability as an assessment tool in health professions education

Sychanturi et al. · BMC Medical Education · 2023

Structured oral formats achieve high validity, reliability and acceptability when supported by rubrics, moderation and consistent examiner practice.

How common is commercial contract cheating in higher education and is it increasing?

Newton · Frontiers in Education · 2018

Meta-analysis across 65 studies finds contract cheating prevalence rises sharply in recent samples, underscoring systemic integrity risk.

Integrity & AI

Researchers fool university markers with AI-generated exam papers

The Guardian · 2024

University of Reading markers identified only one AI-generated submission out of 33, exposing the limits of text-based detection.

Academic integrity and the safety of UK degrees

Newton · University of Wolverhampton · 2023

Argues that 8–9% of UK degrees may be compromised by contract cheating, reframing integrity as a strategic institutional issue.

The oral examination (viva)

University of Cambridge · 2024

Sets out authorship assurance and verification as core functions of the doctoral viva, reinforcing oral defence as an integrity mechanism.

Sector Guidance

Responding to feedback from students: Guidance about providing feedback and assessment

QAA · 2023

Recognises oral assessment and presentation as legitimate assessment modes within mainstream university quality practice.

Resources for PGR students to assist viva preparation

QAA · 2023

Shows how sector guidance already frames oral defence as a normal, supported part of rigorous academic assessment.

Oral assessment methods

UNSW Teaching Gateway · 2024

Provides design guidance for oral assessment as structured evaluative conversation, aligning practice with learning outcomes and student support.

Innovation & Scalability

AI-supported viva application in physiology education

American Physiological Society · 2024

Reports no significant difference in marks versus traditional vivas, suggesting technology-supported oral assessment can preserve academic judgement.

Oral assessments enhanced by generative AI: Scalability and student engagement

ASCILITE Conference Proceedings · 2024

Shows how AI-assisted question generation and structured delivery can remove the historic scalability bottleneck in oral assessment.